An AI software development approach that drives RAG PoCs to success

AI software development carries challenges that traditional software work does not. This article explains how exaBase Studio enables incremental AI software development and helps PoCs succeed.

Introduction

AI-powered software is being adopted into core enterprise operations, especially since ChatGPT emerged in 2022. AI software development and adoption projects, however, carry difficulties that traditional software projects do not. From a project practitioner's perspective, this article shares one of our approaches to lowering project risk and raising the success rate.

AI software tends to be more complex than traditional software in many dimensions — data volume, processing time, and probabilistic behavior. Although AI is only a small part of the whole system [1], understanding the AI's performance and locking down its specification ends up driving most of the system's overall specifications. Verifying the AI as a PoC (proof of concept) at the very start of implementation is therefore extremely important.

And yet AI PoCs face many difficulties [2] — meeting accuracy targets, sourcing sufficient data in quantity and quality, defining evaluation criteria, and securing buy-in from both the field and leadership. Neglecting these challenges leads directly to project delays and rework cost.

Incremental AI software development

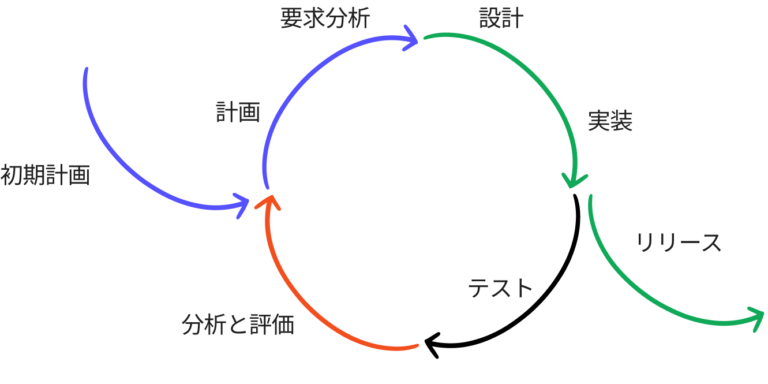

Drawing on years of experience and lessons from AI projects, we built exaBase Studio — an AI software development and operations foundation. In recent projects we practice "incremental AI software development" using exaBase Studio. Incremental development means starting with a working piece of software, evolving its various parts on separate schedules, and integrating each as it completes. The approach traces back to top-down programming and functional expansion as proposed by H. Mills [4][5], and is carried forward by the Principles behind the Agile Manifesto [3].

Incremental development pays off in production software work and in AI PoCs alike. Many traditional PoCs end with only a written report. With exaBase Studio, it is relatively easy to build and deploy a demo app that lets users experience AI inputs and outputs. For popular use cases such as RAG, templates let you deploy something that already works early, then add and verify features incrementally. That is "incremental AI software development" in action.

This approach lets domain-expert users evaluate the work early, surfacing domain-specific issues that AI developers cannot easily spot themselves. As a result, the team can plan and apply countermeasures earlier in the project.

Smoother evaluation of AI systems

Reality is rarely that simple. Identifying issues presupposes that you can evaluate the AI system in question — and in AI PoCs, defining, measuring, and evaluating against the criteria are often the hard parts.

1. Defining evaluation criteria

Ideally, the criteria are simple enough for everyone to understand. But work that humans previously performed often has multi-dimensional evaluation axes that are hard to capture with a single metric. Sometimes complex metrics are unavoidable to capture the essence of the work — and not all stakeholders will easily understand them.

2. Measurement

For RAG in particular, when "Golden Data" is not yet in place, even measuring accuracy itself becomes difficult.

3. Evaluating the result

Take RAG: precision, recall, and similar retrieval metrics are common. Defining them is not hard, but accuracy is rarely 100%. Whether 95%, 70%, or 60% accuracy is good enough for business use is hard to judge on paper. With more complex metrics, it is even harder.
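
As a minimal sketch of how retrieval metrics like these might be computed once Golden Data exists, the Python below averages precision@k and recall@k over a set of queries. The function names, the data layout (query id mapped to the ids of relevant documents), and the sample ids are all assumptions made for illustration; none of them come from exaBase Studio.

```python
from typing import Dict, List, Set, Tuple


def precision_recall_at_k(
    retrieved: List[str], relevant: Set[str], k: int
) -> Tuple[float, float]:
    """Return (precision@k, recall@k) for a single query."""
    top_k = retrieved[:k]
    hits = sum(1 for doc_id in top_k if doc_id in relevant)
    precision = hits / len(top_k) if top_k else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


def mean_precision_recall(
    runs: Dict[str, List[str]], golden: Dict[str, Set[str]], k: int
) -> Tuple[float, float]:
    """Average precision@k and recall@k over every query in the golden set."""
    scores = [
        precision_recall_at_k(runs.get(query_id, []), relevant, k)
        for query_id, relevant in golden.items()
    ]
    if not scores:
        return 0.0, 0.0
    n = len(scores)
    return sum(p for p, _ in scores) / n, sum(r for _, r in scores) / n


# Hypothetical Golden Data: query id -> ids of documents judged relevant.
golden = {"q1": {"d1", "d4"}, "q2": {"d7"}}
# Hypothetical retriever output: query id -> ranked document ids.
runs = {"q1": ["d1", "d2", "d4"], "q2": ["d3", "d5", "d6"]}

mean_p, mean_r = mean_precision_recall(runs, golden, k=3)
print(f"precision@3={mean_p:.2f} recall@3={mean_r:.2f}")
```

The numbers this produces are easy to compute, but they still leave open whether a given level is good enough for the business, which is the judgment this section is concerned with.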

Providing a demo app early lets users go beyond reading a PoC report and actually experience the AI's inputs and outputs directly. This makes evaluation smoother. Expected benefits include:

- Promoting understanding and broadening the choice of evaluation criteria: even when criteria are complex, hands-on experience deepens understanding of the metrics. The criteria themselves should still be objective and as easy to grasp as possible, but pairing them with a hands-on environment gives the team more freedom in which criteria to adopt.

- Avoiding blockers: preparing data, especially annotation, is always costly. RAG projects need annotated Golden Data for evaluation. Ideally this data is prepared from the start, but in some cases the collection cost is extreme. Even then, hands-on experience with the AI's outputs can surface issues, so the project can keep moving while the data is being collected.

- Promoting AI evaluation: directly experiencing AI outputs makes it easier to grasp inputs and outcomes intuitively, beyond numerical evaluation. With RAG, even a "bad" answer from the chatbot has shades — completely unacceptable, partially acceptable, or acceptable with the right operational process around it. Hands-on consideration like this draws out richer insight from evaluation.
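
One way to keep such graded, hands-on judgments alongside numerical metrics is to record a verdict per answer and summarize the shares. The sketch below is only an illustration: the `Verdict` categories mirror the shades described above, while the names, the data shape, and the sample session are hypothetical.

```python
from collections import Counter
from enum import Enum
from typing import Dict, Iterable


class Verdict(Enum):
    """Graded judgments a domain expert might give to a chatbot answer."""
    ACCEPTABLE = "acceptable"
    ACCEPTABLE_WITH_PROCESS = "acceptable with operational process"
    PARTIALLY_ACCEPTABLE = "partially acceptable"
    UNACCEPTABLE = "unacceptable"


def verdict_shares(verdicts: Iterable[Verdict]) -> Dict[Verdict, float]:
    """Return the fraction of answers that received each verdict."""
    counts = Counter(verdicts)
    total = sum(counts.values())
    return {v: (counts[v] / total if total else 0.0) for v in Verdict}


# Hypothetical verdicts collected from one hands-on session with the demo app.
session = [
    Verdict.ACCEPTABLE,
    Verdict.ACCEPTABLE,
    Verdict.PARTIALLY_ACCEPTABLE,
    Verdict.ACCEPTABLE_WITH_PROCESS,
    Verdict.UNACCEPTABLE,
]

shares = verdict_shares(session)
for verdict, share in shares.items():
    print(f"{verdict.value}: {share:.0%}")
```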

Promoting collaboration

Providing a demo app also makes collaboration between users and developers easier, in line with the fourth Agile principle [3]: "Business people and developers must work together daily throughout the project." Concretely, investment decisions and requirement clarification become smoother. Expected benefits include:

- Greater confidence and transparency in investment decisions: stakeholders and leadership can decide go / no-go for follow-on work with more conviction.

- Clearer production requirements: in a PoC, the goal is only to experience and evaluate AI inputs and outputs, so the demo app stays minimal. Even so, hands-on experience with it makes concrete discussions about production UI/UX possible.

Conclusion

In AI software development projects, verifying the AI in a PoC at the very start is essential to making the downstream software development succeed. AI software development surfaces challenges that traditional software projects do not, and incremental development with exaBase Studio is one way to address them. The approach aligns with the Principles behind the Agile Manifesto [3] and brings benefits including early working software, smoother AI evaluation, and stronger collaboration.

References

- [1] D. Sculley et al. Hidden Technical Debt in Machine Learning Systems. NIPS, pages 2503-2511. 2015.

- [2] NEDO. Survey report on the use of artificial intelligence and machine learning in industry, and on the safety of AI technology. 2019.

- [3] Kent Beck et al. Principles behind the Agile Manifesto. https://agilemanifesto.org/principles.html. 2001.

- [4] H. D. Mills. Top-down programming in large systems. In Debugging Techniques in Large Systems, R. Rustin, ed., Prentice-Hall. 1971.

- [5] C. Larman and V. R. Basili. Iterative and incremental development: a brief history. Computer, vol. 36, no. 6, pages 47-56. 2003.